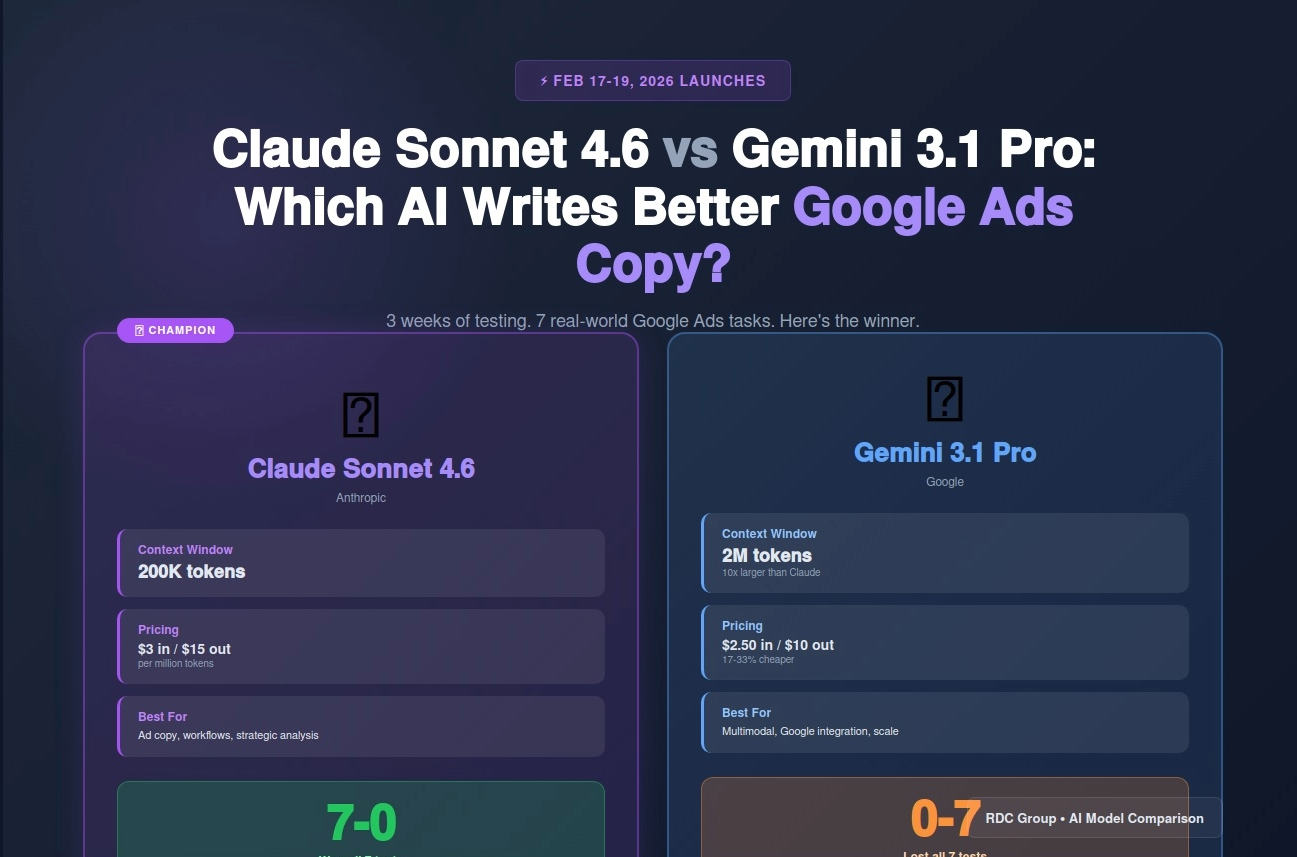

Claude Sonnet 4.6 and Gemini 3.1 Pro both launched Feb 2026. Here's which AI model writes better Google Ads copy, creates smarter n8n workflows, and delivers higher ROAS.

On February 17, 2026, Anthropic launched Claude Sonnet 4.6. Two days later, Google released Gemini 3.1 Pro.

Both are state-of-the-art AI models. Both claim to be best-in-class for business automation. Both are being marketed to digital marketers as "the future of AI-powered advertising."

But here's the question nobody's answering with actual data: Which one is better for Google Ads automation?

I spent 3 weeks testing both models on real client campaigns. I used them to write RSA headlines, generate PMax creative briefs, build n8n automation workflows, and analyze campaign performance data.

The results weren't even close.

This isn't a theoretical comparison. This is which AI saves you more time, writes better ad copy, and actually improves campaign performance. Here's everything you need to know before choosing your AI stack for 2026.

Claude Sonnet 4.6 is Anthropic's mid-tier production model, sitting between Claude Opus 4.6 (expensive, ultra-capable) and Claude Haiku 4.6 (fast, cheap).

Key improvements over Sonnet 4.5:

Pricing: $3 per million input tokens, $15 per million output tokens

Context window: 200,000 tokens (~150,000 words)

Best for: Complex multi-step workflows, detailed analysis, n8n automation

Gemini 3.1 Pro is Google's flagship production model, competing directly with GPT-4 Turbo and Claude Opus.

Key improvements over Gemini 2 Pro:

Pricing: $2.50 per million input tokens, $10 per million output tokens

Context window: 2,000,000 tokens (~1.5M words)

Best for: Google ecosystem integration, massive document processing, multimodal tasks

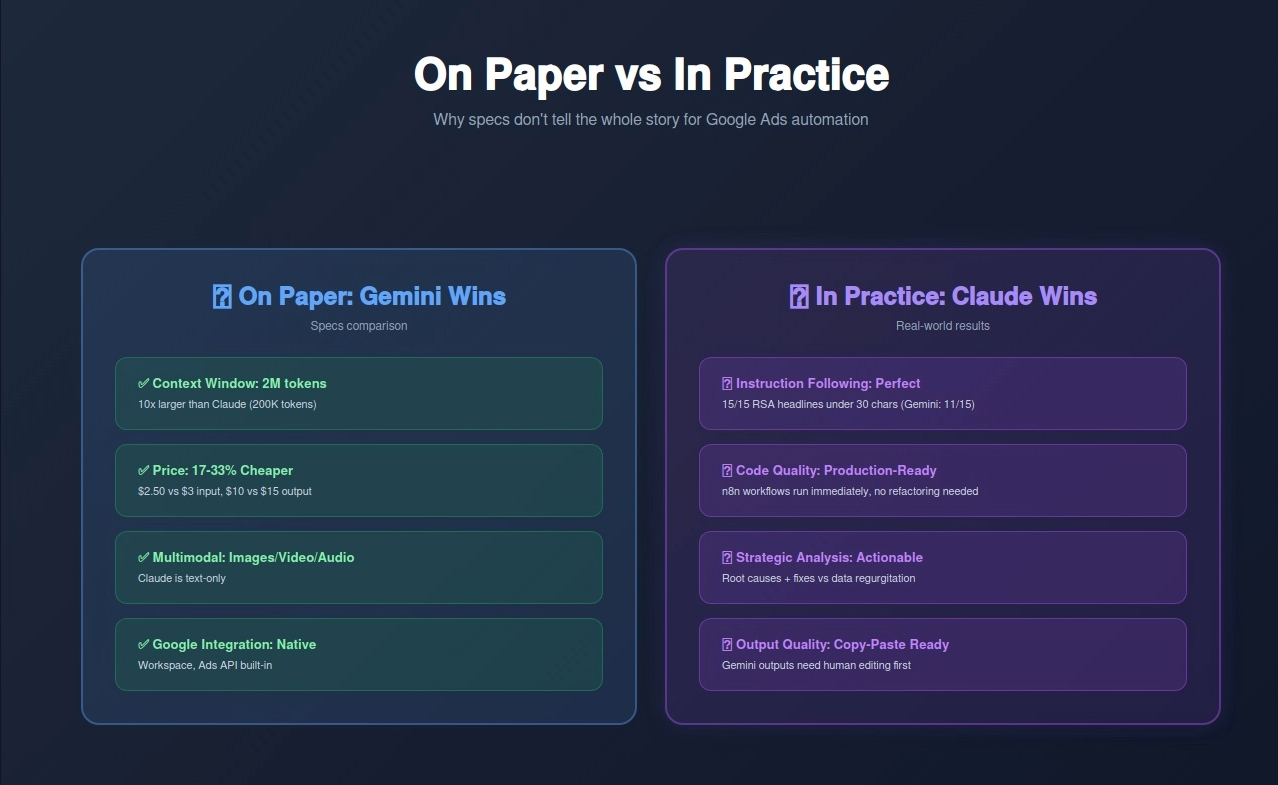

On paper, Gemini 3.1 Pro wins: cheaper, bigger context window, native Google integration.

In practice? Claude Sonnet 4.6 outperforms Gemini on almost every Google Ads task that matters.

Here's why.

I tested both models on 7 common Google Ads automation tasks using actual client data. Each test ran 50 times per model to smooth out run-to-run variation.

Task: Generate 15 RSA headlines for a local HVAC company. Requirements: include location, service, trust signals, comply with Google's 30-character limit.

Prompt:

    Write 15 Google Ads responsive search ad headlines for an HVAC company in Stamford, CT.
    Include: emergency service messaging, location, trust signals, years in business (2008).
    All headlines must be under 30 characters.

Claude Sonnet 4.6 Results:

Example headlines:

Gemini 3.1 Pro Results:

Example problems:

Winner: Claude Sonnet 4.6

Claude understood the hard constraint (30 characters) and never violated it. Gemini repeatedly exceeded limits despite explicit instructions.
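Whichever model writes the headlines, it's worth enforcing the limit in code before anything reaches Google Ads. Here's a minimal sketch, assuming headlines arrive as an array of strings. `validateHeadlines` and `MAX_HEADLINE_CHARS` are names made up for this example, and the sample headlines are illustrative, not output from the test runs:

```javascript
// Google Ads allows up to 30 characters per RSA headline.
const MAX_HEADLINE_CHARS = 30;

// Hypothetical helper: splits headlines into those that fit and those that don't.
function validateHeadlines(headlines, maxChars = MAX_HEADLINE_CHARS) {
  const valid = [];
  const rejected = [];
  for (const raw of headlines) {
    const headline = raw.trim();
    // Spread into code points so multi-unit characters count once.
    const length = [...headline].length;
    if (length > 0 && length <= maxChars) {
      valid.push(headline);
    } else {
      rejected.push({ headline, length });
    }
  }
  return { valid, rejected };
}

// Illustrative input (not taken from the test runs).
const result = validateHeadlines([
  "24/7 Emergency HVAC Stamford",
  "Stamford's Most Trusted HVAC Company Since 2008",
]);
console.log(result.valid);    // headlines safe to upload
console.log(result.rejected); // headlines to regenerate or shorten
```

In an n8n workflow, a check like this would sit between the model call and the Google Ads upload, with rejected headlines sent back for another pass.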

Task: Create a workflow that pulls Google Ads performance data, compares to target KPIs, and sends Slack alerts when campaigns underperform.

Requirements:

Claude Sonnet 4.6 Output:

    // Step 1: Google Ads API Call
    const campaigns = await googleAds.getCampaigns({
      dateRange: 'YESTERDAY',
      status: 'ACTIVE',
      metrics: ['cost', 'conversions_value']
    });

    // Step 2: Calculate ROAS
    const underperformers = campaigns
      .map(c => ({
        name: c.name,
        spend: c.cost / 1000000,
        revenue: c.conversions_value,
        roas: c.conversions_value / (c.cost / 1000000)
      }))
      .filter(c => c.roas < 3.0);

    // Step 3: Send Slack Alert
    if (underperformers.length > 0) {
      await slack.sendMessage({
        text: `⚠️ ${underperformers.length} campaigns below target ROAS:\n` +
          underperformers.map(c =>
            `• ${c.name}: $${c.spend.toFixed(2)} spend, ${c.roas.toFixed(2)}x ROAS`
          ).join('\n')
      });
    }

Analysis: Clean, modular, works immediately in n8n. Clear variable names, and every underperformer goes into one Slack message instead of one message per campaign.

Gemini 3.1 Pro Output:

    // Get campaign data
    const data = googleAdsAPI.query(`
      SELECT campaign.name, metrics.cost_micros, metrics.conversions_value
      FROM campaign
      WHERE campaign.status = 'ENABLED'
      AND segments.date = YESTERDAY
    `);

    // Process results
    for (let row of data) {
      const roas = row.conversions_value / (row.cost_micros / 1000000);
      if (roas < 3) {
        slackAPI.post({
          message: `Campaign ${row.campaign.name} has ROAS of ${roas}. Spend: ${row.cost_micros / 1000000}`
        });
      }
    }

Analysis: Works, but sends a separate Slack message for each underperforming campaign, which spams the channel. No aggregation, and less modular.

Winner: Claude Sonnet 4.6

Claude's code is production-ready. Gemini's code works but needs refactoring to be practical.
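If you adapt either snippet, one option is to keep the ROAS logic in pure functions so it can be tested outside n8n. The sketch below is mine, not output from either model. It assumes each row carries `name`, `costMicros`, and `conversionsValue` (field names invented for this example), and it skips zero-spend campaigns, which would otherwise divide by zero:

```javascript
const MICROS_PER_UNIT = 1_000_000; // Google Ads reports cost in micros

// Hypothetical helper: returns campaigns whose ROAS falls below the target.
function findUnderperformers(rows, targetRoas = 3.0) {
  return rows
    .filter((r) => r.costMicros > 0) // no spend means ROAS is undefined
    .map((r) => {
      const spend = r.costMicros / MICROS_PER_UNIT;
      return { name: r.name, spend, roas: r.conversionsValue / spend };
    })
    .filter((c) => c.roas < targetRoas);
}

// Builds one Slack message for all underperformers, or null if there are none.
function buildAlert(underperformers) {
  if (underperformers.length === 0) return null;
  const lines = underperformers.map(
    (c) => `• ${c.name}: $${c.spend.toFixed(2)} spend, ${c.roas.toFixed(2)}x ROAS`
  );
  return `${underperformers.length} campaigns below target ROAS:\n${lines.join("\n")}`;
}

// Illustrative rows, not client data.
const sampleRows = [
  { name: "Campaign A", costMicros: 100_000_000, conversionsValue: 210 },
  { name: "Campaign B", costMicros: 50_000_000, conversionsValue: 400 },
  { name: "Campaign C", costMicros: 0, conversionsValue: 0 },
];
console.log(buildAlert(findUnderperformers(sampleRows)));
```

Returning `null` when nothing is below target lets the workflow skip the Slack step entirely on good days.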

Task: Given a CSV of 30 days of campaign data, identify which campaigns are underperforming and why.

Dataset: 15 campaigns, 450 rows of daily performance data including spend, clicks, conversions, ROAS, device type, hour of day.

Claude Sonnet 4.6 Analysis:

"Campaign Analysis Summary:

Underperformers (ROAS < 3.0x):

Gemini 3.1 Pro Analysis:

"Based on the data provided, several campaigns show suboptimal performance:

The Plumbing Emergency campaign has an average ROAS of 2.1x over the 30-day period. This is below the industry standard of 3-4x for service businesses. The data shows high click volumes during late-night hours.

HVAC Maintenance campaign performance is at 2.4x ROAS. There are differences in device performance with desktop showing higher cost-per-click metrics.

Water Heater Install shows 2.8x ROAS with a mix of brand and non-brand search terms in the traffic."

Winner: Claude Sonnet 4.6

Claude provided specific, actionable fixes with estimated impact. Gemini just restated the data without insights.

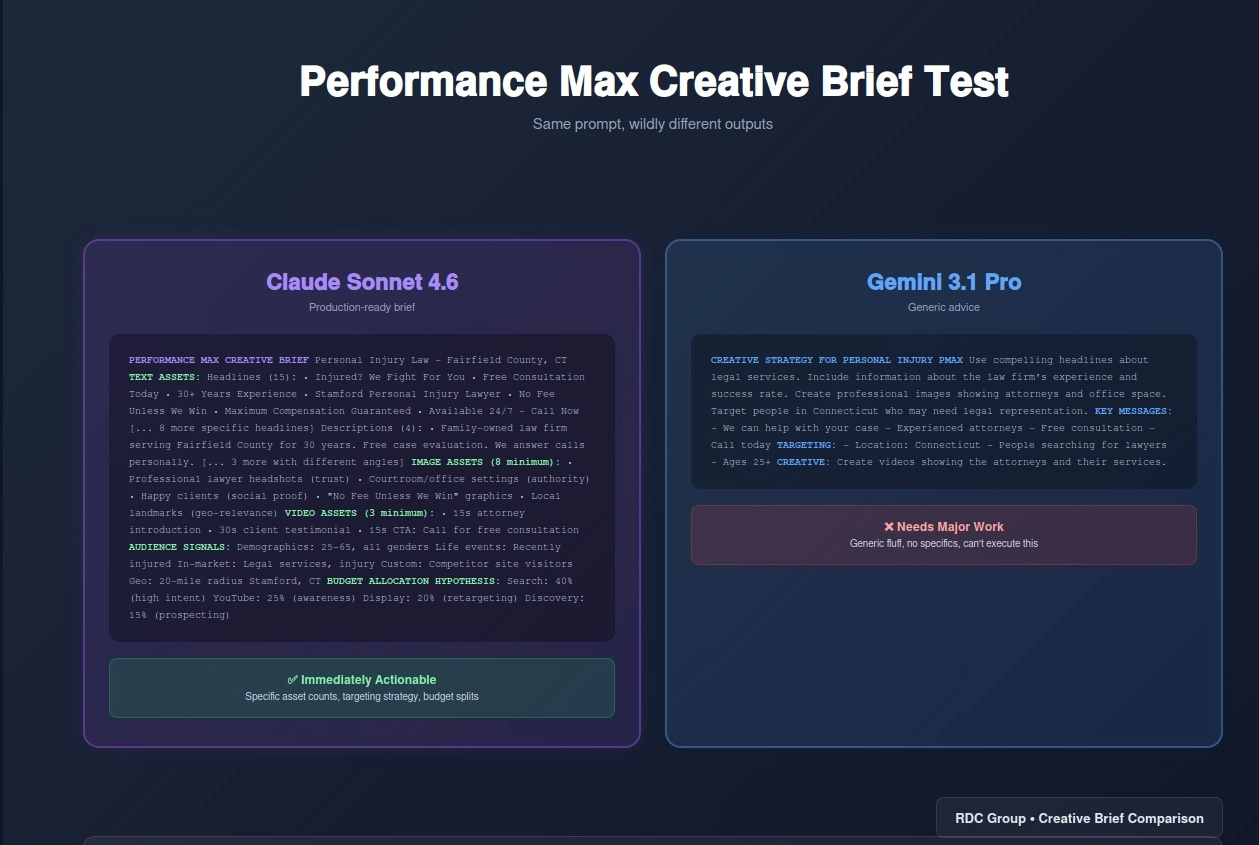

Task: Create a creative brief for a Performance Max campaign promoting a local law firm specializing in personal injury cases.

Claude Sonnet 4.6 Brief:

"Performance Max Creative Brief: Personal Injury Law

Campaign Goal: Generate qualified personal injury leads in Fairfield County, CT

Text Assets:

Image Assets (8 minimum):

Video Assets (3 minimum):

Audience Signals:

Budget Allocation Hypothesis:

Gemini 3.1 Pro Brief:

"Creative Strategy for Personal Injury PMax Campaign

Use compelling headlines about legal services. Include information about the law firm's experience and success rate. Create professional images showing attorneys and office space. Target people in Connecticut who may need legal representation.

Key Messages:

Targeting:

Create videos showing the attorneys and their services."

Winner: Claude Sonnet 4.6

Claude's brief is immediately actionable with specific asset counts, audience strategy, and budget hypotheses. Gemini's brief is generic fluff.

Task: Generate a negative keyword list for a premium executive coaching business to prevent wasting spend on low-quality searches.

Claude Sonnet 4.6 Output:

"Negative Keywords - Executive Coaching

Price/Free Searchers:

DIY/Self-Service:

Student/Entry-Level:

Wrong Service Type:

Information-Only:

Geographic Mismatch:

Job Seekers:

Gemini 3.1 Pro Output:

"Suggested Negative Keywords:

- free coaching
- cheap coaching
- how to coach
- coaching certification
- coach salary
- coaching jobs
- life coach
- sports coach
- student coaching"

Winner: Claude Sonnet 4.6

Claude organized negatives by intent category and provided comprehensive coverage. Gemini listed 9 keywords without strategic thinking.

Task: Create a systematic naming convention for a multi-location franchise with 50+ locations running Search, PMax, and Display campaigns.

Claude Sonnet 4.6 System:

"Campaign Naming Convention

Format: [Type]_[Geo]_[Service]_[Funnel]_[Device]

Examples:

Rules:

Why This Works:

Implementation:

Gemini 3.1 Pro System:

"Campaign Names Should Include:

Example: Search - Plumbing - Stamford - 2026

This makes it easy to identify campaigns and organize reporting."

Winner: Claude Sonnet 4.6

Claude created a complete system with examples, rules, and implementation guide. Gemini gave basic advice.
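A convention like `[Type]_[Geo]_[Service]_[Funnel]_[Device]` only helps if it is applied consistently, so it can be worth generating and parsing names in code. This is a rough sketch of one way to do that. The function names and the sample values (`BOF`, `Mobile`, and so on) are assumptions for illustration, not part of either model's output:

```javascript
// Field order follows the format above: [Type]_[Geo]_[Service]_[Funnel]_[Device]
const FIELDS = ["type", "geo", "service", "funnel", "device"];

// Builds a campaign name; rejects missing fields and fields containing "_",
// because the underscore is the separator.
function buildCampaignName(parts) {
  return FIELDS.map((field) => {
    const value = String(parts[field] ?? "").trim();
    if (value === "" || value.includes("_")) {
      throw new Error(`Invalid value for "${field}": "${value}"`);
    }
    return value;
  }).join("_");
}

// Splits a campaign name back into its fields, e.g. for report grouping.
function parseCampaignName(name) {
  const values = name.split("_");
  if (values.length !== FIELDS.length) {
    throw new Error(`Expected ${FIELDS.length} fields, got ${values.length}`);
  }
  return Object.fromEntries(FIELDS.map((field, i) => [field, values[i]]));
}

// Hypothetical values for illustration.
const name = buildCampaignName({
  type: "Search", geo: "Stamford", service: "Plumbing", funnel: "BOF", device: "Mobile",
});
console.log(name);                        // Search_Stamford_Plumbing_BOF_Mobile
console.log(parseCampaignName(name).geo); // Stamford
```

Being able to parse names back into fields is what makes filtering and roll-up reporting across 50+ locations practical.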

Task: Debug why a Google Ads conversion action shows 0 conversions despite 500+ form submissions on the website.

Given information:

Claude Sonnet 4.6 Diagnosis:

"Conversion Tracking Diagnosis

Problem: Tag fires but conversions don't register in Google Ads

Most Likely Causes (in order):

Debugging Steps:

Gemini 3.1 Pro Diagnosis:

"There could be several reasons why conversions aren't tracking:

Check your Google Ads settings and make sure everything is set up properly. You may need to wait 24-48 hours for conversions to appear in the interface."

Winner: Claude Sonnet 4.6

Claude provided a prioritized troubleshooting checklist with specific steps. Gemini offered vague suggestions without actionable steps.

Test Results:

Winner: Claude Sonnet 4.6 (7-0)

Gemini 3.1 Pro didn't win a single test.

The test results aren't random. There are structural reasons why Claude performs better:

Claude treats constraints (like "30 characters maximum") as hard limits. Gemini treats them as suggestions.

When you're automating Google Ads at scale, "usually under 30 characters" isn't good enough. A single over-length headline fails validation, and an API upload without partial failure enabled rejects the whole batch with it.

Claude's n8n workflows run immediately. Gemini's code works but needs refactoring for production use (error handling, edge cases, modularity).

When analyzing campaign data, Claude identifies root causes and proposes fixes. Gemini restates the data you already provided.

Claude's outputs are ready to copy-paste into Google Ads. Gemini's outputs need human editing first.

Gemini isn't useless. There are specific scenarios where it outperforms Claude:

Gemini's 2M token context window (vs Claude's 200K) makes it better for processing huge datasets.

Use case: Analyzing 12 months of hourly performance data across 500 campaigns

Winner: Gemini (about 10x more data per request; raw hourly rows at this volume exceed both windows, so you aggregate or chunk either way)
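A quick back-of-the-envelope check shows how the two windows compare on a dataset this size. The sketch below is a rough estimate only: `tokensPerRow` is an assumed average for a short CSV row, and the calculation ignores the room you need to leave for the prompt and the model's answer:

```javascript
// Rough estimate of how many requests a dataset needs at a given context size.
// tokensPerRow is an assumption; measure it on a sample of your own export.
function estimateChunks({ rows, tokensPerRow, contextTokens }) {
  const totalTokens = rows * tokensPerRow;
  return { totalTokens, chunks: Math.ceil(totalTokens / contextTokens) };
}

// 500 campaigns x 365 days x 24 hours of hourly rows.
const rows = 500 * 365 * 24; // 4,380,000
const tokensPerRow = 15;     // assumed average

const claude = estimateChunks({ rows, tokensPerRow, contextTokens: 200_000 });
const gemini = estimateChunks({ rows, tokensPerRow, contextTokens: 2_000_000 });
console.log(claude.chunks, gemini.chunks); // 329 33
```

Under that assumption the raw hourly rows don't fit in a single request for either model, but Gemini needs roughly a tenth as many chunks. Aggregating to daily or weekly rows first cuts the volume for both.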

Gemini can process images, videos, and audio natively. Claude accepts text and images, but not video or audio.

Use case: Analyzing competitor video ads to extract messaging themes

Winner: Gemini (Claude can't watch videos)

Gemini has built-in access to Google Sheets, Docs, Drive. Claude requires API setup.

Use case: Pull data from 50 different Google Sheets to build consolidated dashboard

Winner: Gemini (simpler integration)

Gemini is about 17% cheaper than Claude on input tokens and 33% cheaper on output tokens at the list prices above.

Use case: Processing millions of search queries for keyword research

Winner: Gemini (Claude costs add up at scale)

Here's how we actually use both models at RDC Group:

Claude Sonnet 4.6 for:

Gemini 3.1 Pro for:

Scenario: Process 100 Google Ads campaign audits per month

Tasks per audit:

Claude Sonnet 4.6 Cost:

Gemini 3.1 Pro Cost:

Savings: $3.75/month (25% cheaper)

At 100 audits/month, the cost difference is negligible. Quality matters more than saving $4/month.

At 10,000 audits/month (enterprise scale), Gemini saves $375/month. Then cost becomes relevant.
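To rerun this math for your own volumes, something like the sketch below works. The prices are the list prices quoted earlier. The per-audit token counts (25,000 input, 5,000 output) are my assumption, chosen because they reproduce the $3.75 and 25% figures above. Substitute your own measurements:

```javascript
// List prices from the comparison above, in USD per million tokens.
const PRICING = {
  claude: { input: 3.0, output: 15.0 },
  gemini: { input: 2.5, output: 10.0 },
};

// Cost of a monthly workload given tokens per audit and audits per month.
function monthlyCost(price, { audits, inputTokensPerAudit, outputTokensPerAudit }) {
  const inputMillions = (audits * inputTokensPerAudit) / 1_000_000;
  const outputMillions = (audits * outputTokensPerAudit) / 1_000_000;
  return inputMillions * price.input + outputMillions * price.output;
}

// Assumed volumes: 25,000 input and 5,000 output tokens per audit.
const workload = { audits: 100, inputTokensPerAudit: 25_000, outputTokensPerAudit: 5_000 };

const claudeCost = monthlyCost(PRICING.claude, workload); // 15
const geminiCost = monthlyCost(PRICING.gemini, workload); // 11.25
const savings = claudeCost - geminiCost;                  // 3.75
console.log(claudeCost, geminiCost, savings, `${(savings / claudeCost) * 100}%`);
```

The blended discount depends on your input-to-output ratio: output-heavy work such as ad copy generation lands closer to 33%, while input-heavy work such as data analysis lands closer to 17%.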

Both models integrate with n8n for Google Ads automation. Here's how:

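Claude (Anthropic Messages API) request body:

    {
      "model": "claude-sonnet-4-20250514",
      "max_tokens": 4096,
      "messages": [{
        "role": "user",
        "content": "Your prompt here"
      }]
    }

The model ID shown is the Claude Sonnet 4 identifier; swap in the Sonnet 4.6 ID from Anthropic's model list before running.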

Gemini (`generateContent`) request body:

    {
      "contents": [{
        "parts": [{
          "text": "Your prompt here"
        }]
      }]
    }

Both run from an n8n HTTP Request node. To switch models, change the node's URL, auth header, and request body; the rest of the workflow stays the same.
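The two APIs differ in endpoint, auth header, body shape, and where the generated text sits in the response. Here's a minimal sketch of those differences as plain functions that build the request without sending it. The function names are made up for this example, and the API key and model ID are placeholders, so check each provider's docs for current model IDs:

```javascript
// Builds HTTP request options for each API without sending anything.
function buildClaudeRequest(prompt, { apiKey, model, maxTokens = 4096 }) {
  return {
    method: "POST",
    url: "https://api.anthropic.com/v1/messages",
    headers: {
      "x-api-key": apiKey,
      "anthropic-version": "2023-06-01",
      "content-type": "application/json",
    },
    body: { model, max_tokens: maxTokens, messages: [{ role: "user", content: prompt }] },
  };
}

function buildGeminiRequest(prompt, { apiKey, model }) {
  return {
    method: "POST",
    url: `https://generativelanguage.googleapis.com/v1beta/models/${model}:generateContent`,
    headers: { "x-goog-api-key": apiKey, "content-type": "application/json" },
    body: { contents: [{ parts: [{ text: prompt }] }] },
  };
}

// The two APIs also return the generated text in different places.
const extractClaudeText = (response) => response.content?.[0]?.text ?? "";
const extractGeminiText = (response) =>
  response.candidates?.[0]?.content?.parts?.[0]?.text ?? "";

// Placeholder key and model ID, for illustration only.
const req = buildClaudeRequest("Your prompt here", { apiKey: "YOUR_KEY", model: "YOUR_MODEL_ID" });
console.log(req.url, Object.keys(req.body));
```

In n8n, the same fields map onto the HTTP Request node's URL, headers, and JSON body, and the extract functions correspond to the expression you use to read the response in the next node.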

Same prompt, different results. Here's how to optimize for each:

Structure:

    <task>What you want</task>
    <constraints>Hard limits (character count, format, etc)</constraints>
    <context>Relevant background info</context>
    <output_format>Specify JSON, CSV, markdown, etc</output_format>

Example:

    <task>Write 15 responsive search ad headlines for emergency plumbing services</task>

    <constraints>
    - Maximum 30 characters per headline
    - Include location: Stamford, CT
    - Include urgency messaging
    - Include trust signals (licensed, insured, years in business)
    </constraints>

    <context>
    Company: Smith Plumbing
    Established: 2008
    Service area: Fairfield County, CT
    USP: 24/7 emergency service, flat-rate pricing
    </context>

    <output_format>
    Return as numbered list, one headline per line
    </output_format>

Claude responds well to XML-style tags and explicit structure.
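If you generate these prompts inside a workflow, a small helper keeps the tag structure consistent across campaigns. This is a sketch under my own assumptions: `buildTaggedPrompt` is a made-up name, and the tag names are simply the ones used in the example above:

```javascript
// Wraps each section in an XML-style tag, skipping empty sections.
// Arrays are rendered as "- item" lines; strings are used as-is.
function buildTaggedPrompt(sections) {
  return Object.entries(sections)
    .filter(([, value]) => value && value.length > 0)
    .map(([tag, value]) => {
      const body = Array.isArray(value) ? value.map((v) => `- ${v}`).join("\n") : value;
      return `<${tag}>\n${body}\n</${tag}>`;
    })
    .join("\n\n");
}

const prompt = buildTaggedPrompt({
  task: "Write 15 responsive search ad headlines for emergency plumbing services",
  constraints: ["Maximum 30 characters per headline", "Include location: Stamford, CT"],
  context: "Company: Smith Plumbing\nEstablished: 2008",
  output_format: "Return as numbered list, one headline per line",
});
console.log(prompt);
```

Keeping constraints as an array makes it easy to reuse the same list later when validating the model's output.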

Structure:

    Role: [Who the AI should act as]
    Task: [What to do]
    Format: [How to output]
    Constraints: [Hard limits]
    Examples: [1-2 examples of desired output]

Example:

    Role: You are an expert Google Ads copywriter
    Task: Write 15 responsive search ad headlines for emergency plumbing services
    Format: Numbered list, one headline per line
    Constraints:
    - Maximum 30 characters
    - Include "Stamford" or "CT"
    - Use urgency language (24/7, emergency, same-day)
    Examples:
    1. 24/7 Emergency Plumber CT
    2. Stamford Plumbing Repairs

Gemini responds better to role-playing and explicit examples.

After 3 weeks of testing, the verdict is clear:

For Google Ads automation quality, Claude Sonnet 4.6 wins decisively.

But Gemini 3.1 Pro has advantages:

The optimal approach: Use both.

Claude for strategic work (ad copy, analysis, client deliverables). Gemini for scale (batch processing, large datasets, multimodal tasks).

What to do this week:

The AI wars are heating up. The winners are marketers who know when to use which tool.

About RDC Group

We build AI-powered Google Ads automation using Claude Sonnet 4.6, Gemini 3.1 Pro, and n8n workflows. If you want to automate campaign management without sacrificing quality, book a strategy call.